Emble: Empowering Educators with Seamless Course Design Tools

The Problem

Most educators at RMIT weren't designers and shouldn't have needed to be. Yet the available tools forced them either to spend hours on visual formatting or to ship courses that looked inconsistent and unprofessional. Off-the-shelf products lacked the flexibility to meet RMIT's specific brand and accessibility requirements.

The real cost wasn't aesthetic. It was educator time diverted from teaching, and added cognitive load for students facing inconsistent layouts across courses.

My Approach

I began with a comprehensive audit of the existing educator experience, conducting user interviews and observational research to identify where the pain was concentrated. The insight that shaped everything: educators didn't need a powerful design tool — they needed a constrained one. Too much flexibility was itself the problem.

I focused research on the 20% of design decisions that caused 80% of the inconsistency, then worked with the design team to build opinionated assets that made the right choice the easy choice. I ran iterative testing cycles with educators throughout, validating that each asset reduced cognitive load rather than adding to it.

The Work

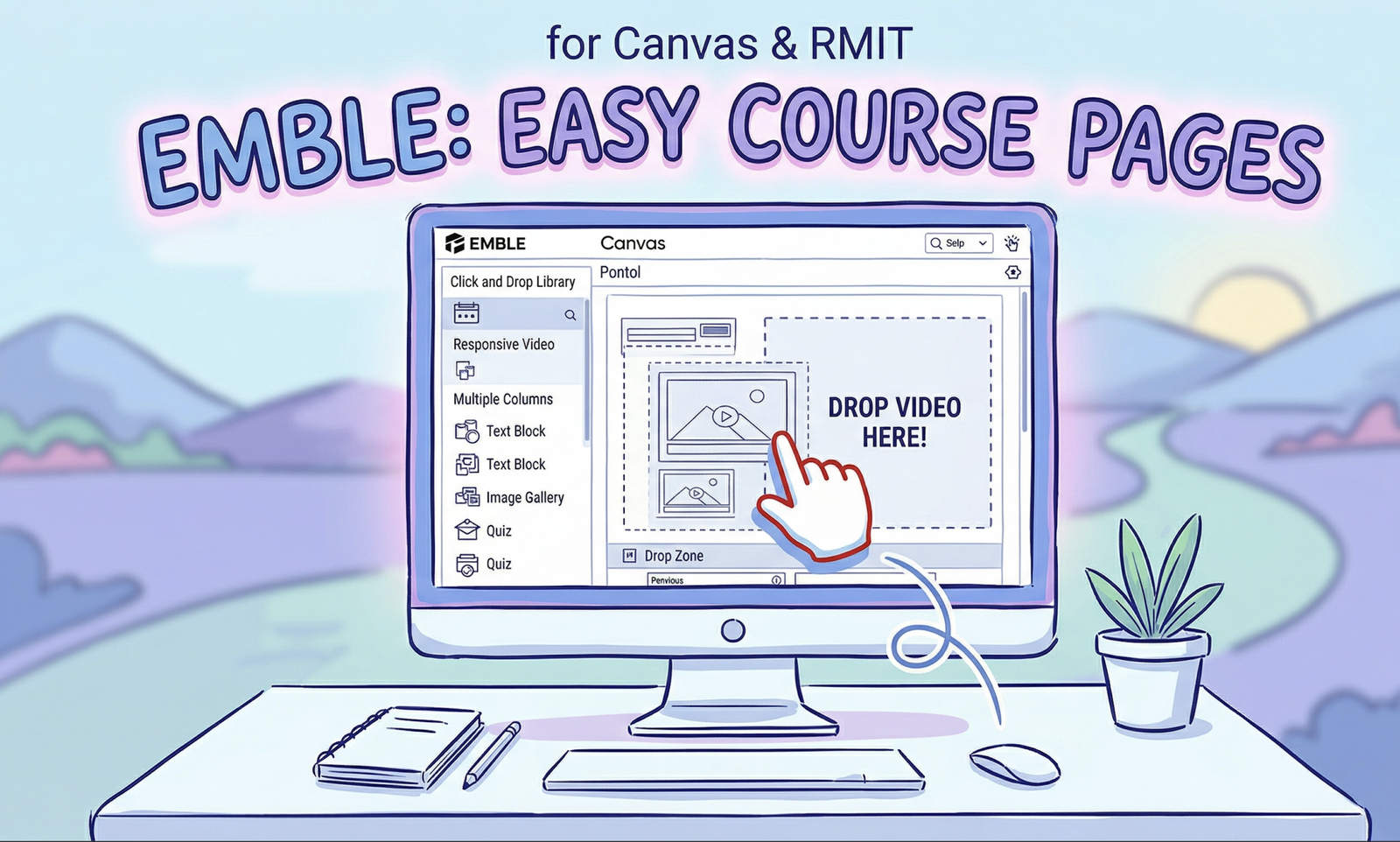

The result was Emble — a bespoke suite of visual assets (banners, columns, callout boxes, dividers) designed specifically for RMIT's brand and accessibility standards. Assets were modular: single elements, grouped elements, and complete page layouts.

Key design decisions: constraining colour choices to RMIT-approved palettes, building accessibility compliance into every asset so educators couldn't accidentally create inaccessible content, and allowing colleges to customise asset availability for their specific contexts.
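To illustrate what "accessibility built in" can mean for a constrained palette, here is a minimal Python sketch. It is not Emble's actual implementation. It assumes the check of interest is the WCAG contrast ratio for normal-size text (4.5:1), and the palette values and function names are hypothetical placeholders rather than RMIT's approved colours.

```python
from itertools import permutations


def _linear(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG relative luminance step)."""
    c = channel / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_colour: str) -> float:
    """Relative luminance of a colour given as '#RRGGBB'."""
    h = hex_colour.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio: (lighter + 0.05) / (darker + 0.05)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def accessible_pairs(palette: list[str], minimum: float = 4.5) -> list[tuple[str, str]]:
    """Return only the (text, background) pairs that meet the minimum ratio."""
    return [
        (fg, bg)
        for fg, bg in permutations(palette, 2)
        if contrast_ratio(fg, bg) >= minimum
    ]


# Hypothetical palette, for illustration only.
PALETTE = ["#000000", "#FFFFFF", "#777777"]
ALLOWED = accessible_pairs(PALETTE)
```

Offering educators only the pairs in `ALLOWED` reflects the same principle as the assets themselves: the inaccessible combination is never presented as an option, so nobody has to remember to avoid it.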

Evidence of Impact

Emble raised the visual design floor across RMIT's Canvas courses, improving consistency and accessibility system-wide. Educators reported significantly faster course production times. The tool also supported the rollout of new programs, including the Bachelor of Business Innovation and Enterprise, by providing tailored assets aligned with program requirements.

What I'd Do Differently

I'd instrument the tool earlier: tracking which assets educators actually used versus ignored would have accelerated the second iteration significantly. I'd also push harder for a quantitative baseline on course production time before launch, so we could measure the before-and-after more rigorously.
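As a rough idea of how little instrumentation this would have needed, here is a minimal Python sketch. It assumes a hypothetical log of (educator, asset) insert events and a known asset catalogue; neither the event format nor the function name comes from the actual tool.

```python
from collections import Counter


def usage_report(catalogue, events):
    """Summarise which catalogue assets were used, and which were never used.

    catalogue: iterable of asset names offered to educators.
    events: iterable of (educator_id, asset_name) tuples, one per insert.
    """
    known = set(catalogue)
    # Count only events that refer to assets actually in the catalogue.
    counts = Counter(asset for _, asset in events if asset in known)
    used = counts.most_common()           # most-used assets first
    ignored = sorted(known - set(counts))  # offered but never inserted
    return used, ignored


# Hypothetical data, for illustration only.
catalogue = ["banner", "columns", "callout box", "divider"]
events = [("e1", "banner"), ("e2", "banner"), ("e1", "callout box")]

used, ignored = usage_report(catalogue, events)
```

Even a tally this simple separates the assets worth refining from the ones worth retiring, which is the decision the second iteration had to make without data.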