Qualitative Analysis of RMIT Course Experience Survey Data

The Problem

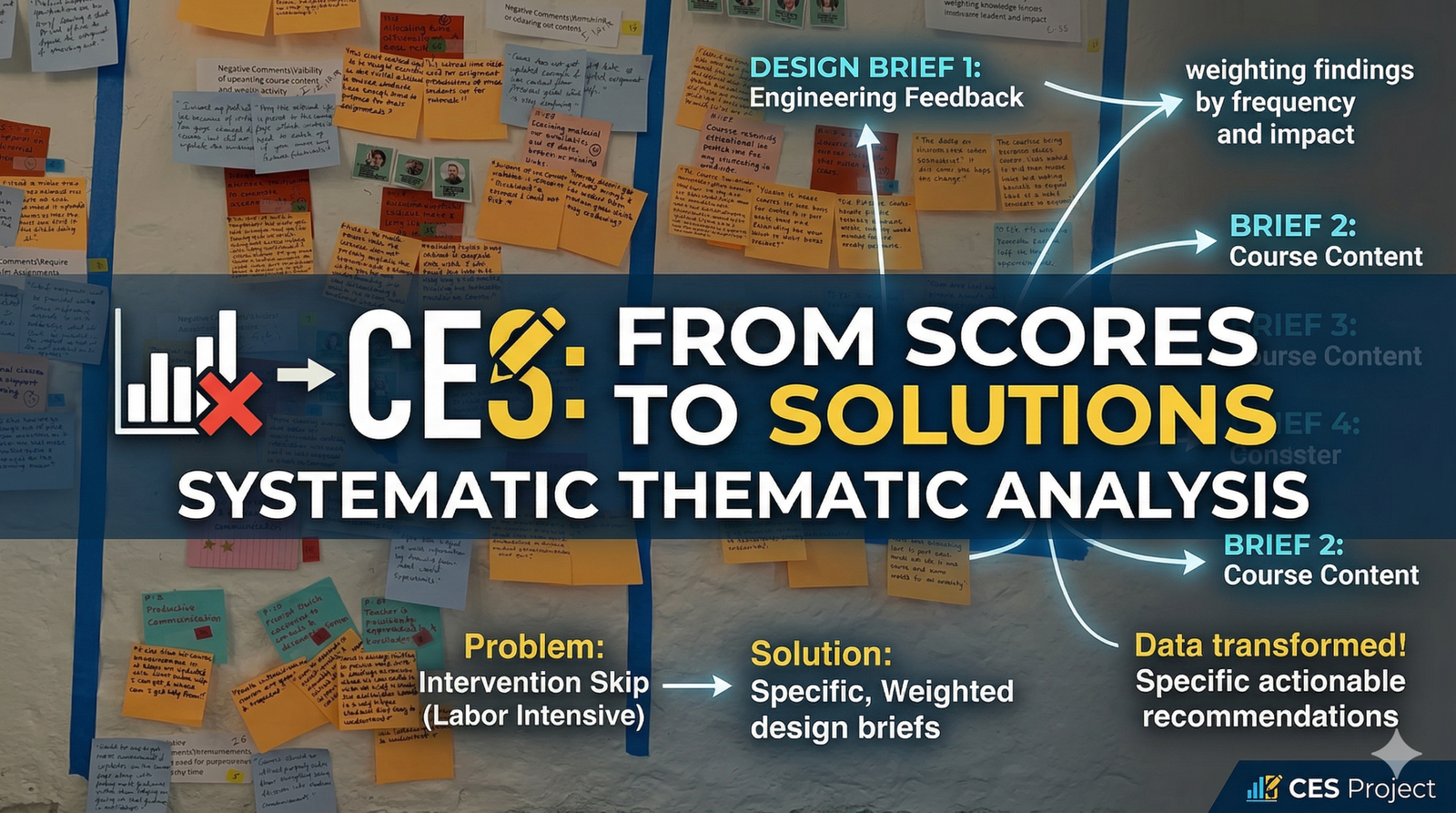

The CES scores told RMIT that students were dissatisfied with assessment methods in certain courses – but not why, and not what to change. Qualitative analysis of open-response data is labour-intensive, which is why it was being skipped. The result was that interventions were generic ('improve feedback') when they needed to be specific ('engineering students receive written feedback too late to act on before the next assessment').

The case for systematic qualitative analysis was simple: it turns 'satisfaction scores' into 'design briefs'.

My Approach

I categorised the collected data into themes (course content, teaching quality, assessment methods, learning resources), then assigned codes using a mix of predetermined and emergent coding. This is a standard qualitative research approach; what differed here was applying it systematically across a large dataset.

I then analysed the coded data in depth, examining recurring themes, emerging patterns, and notable outliers. Rather than treating all feedback equally, I weighted findings by frequency and impact, surfacing the issues that affected the most students most severely.
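To make the weighting idea concrete, here is a minimal sketch of one way frequency and impact could be combined into a single ranking. The case study does not say how the two were combined, so the scoring rule (share of responses multiplied by mean severity), the 1-3 severity scale, and the sample data are all assumptions for illustration.

```python
from collections import defaultdict

# Hypothetical coded responses as (theme, severity) pairs.
# The 1-3 severity scale is an assumption, not taken from the original analysis.
coded = [
    ("assessment methods", 3),
    ("assessment methods", 2),
    ("assessment methods", 3),
    ("teaching quality", 1),
    ("learning resources", 2),
    ("learning resources", 1),
]


def rank_themes(coded_responses):
    """Rank themes by (share of all responses) x (mean severity)."""
    severities = defaultdict(list)
    for theme, severity in coded_responses:
        severities[theme].append(severity)

    total = len(coded_responses)
    scores = {}
    for theme, values in severities.items():
        frequency = len(values) / total      # how many students raised it
        impact = sum(values) / len(values)   # how severely it affected them
        scores[theme] = round(frequency * impact, 3)

    # Highest score first: issues that are both common and severe.
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


ranking = rank_themes(coded)
for theme, score in ranking:
    print(f"{theme}: {score}")
```

Multiplying the two factors means a theme has to be both widespread and severe to rank highly. A different rule, such as a weighted sum, would be a reasonable alternative if rare but severe issues need more visibility.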

The Work

I delivered a comprehensive analysis report covering multiple courses and faculties. The report had three parts: an executive summary for leadership (key findings in 5 minutes), a detailed thematic analysis for coordinators, and specific, actionable recommendations mapped to each finding. I presented the findings to course coordinators, faculty members, and university administration.

Evidence of Impact

The analysis gave RMIT's Education Portfolio a clearer understanding of student experiences, enabling targeted interventions. Findings informed several strategic decisions, including changes to assessment timing, teaching methods, and learning resource formats across the portfolio.

📄 Download CES Analysis Report

What I'd Do Differently

I'd automate the initial coding pass using NLP tooling and spend the human analysis time on interpretation and edge cases – first-pass coding of 100+ survey responses by hand was the least defensible use of researcher time. I'd also build the reporting template upfront so findings map directly onto decision points rather than being reformatted after analysis is complete.
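As a rough illustration of what an automated first pass might look like, the sketch below tags each response with themes by keyword matching and sets aside anything it cannot tag for human review. Keyword matching is a deliberately simple stand-in for "NLP tooling", which is not specified here. The theme names come from the analysis above; the keyword lists, function names, and sample responses are hypothetical.

```python
import re

# Theme names are from the case study; the keyword lists are illustrative assumptions.
CODEBOOK = {
    "course content": ["content", "topic", "syllabus"],
    "teaching quality": ["lecturer", "tutor", "teaching"],
    "assessment methods": ["assessment", "assignment", "exam", "feedback"],
    "learning resources": ["library", "reading", "slides", "recording"],
}


def first_pass_code(response):
    """Return every theme whose keywords appear as whole words in the response."""
    words = set(re.findall(r"[a-z]+", response.lower()))
    return [theme for theme, keywords in CODEBOOK.items() if words & set(keywords)]


def triage(responses):
    """Split responses into machine-coded ones and ones left for a human coder."""
    coded, needs_review = [], []
    for text in responses:
        themes = first_pass_code(text)
        if themes:
            coded.append((text, themes))
        else:
            needs_review.append(text)
    return coded, needs_review


# Hypothetical responses, written for this example only.
responses = [
    "Feedback on the first assignment arrived after the second one was due.",
    "The lecturer explained each topic clearly.",
    "Parking on campus is impossible.",
]

coded, needs_review = triage(responses)
print(coded)
print(needs_review)
```

The unmatched pile is where emergent codes would come from: a researcher reads those responses, decides whether a new code is needed, and adds it to the codebook. Exact word matching misses plurals and synonyms, so a real first pass would need stemming or a trained classifier, and its output would still need spot-checking.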